Machine learning: Study from BGSU doctoral student and professor finds AI capable of blurring lines in the art world


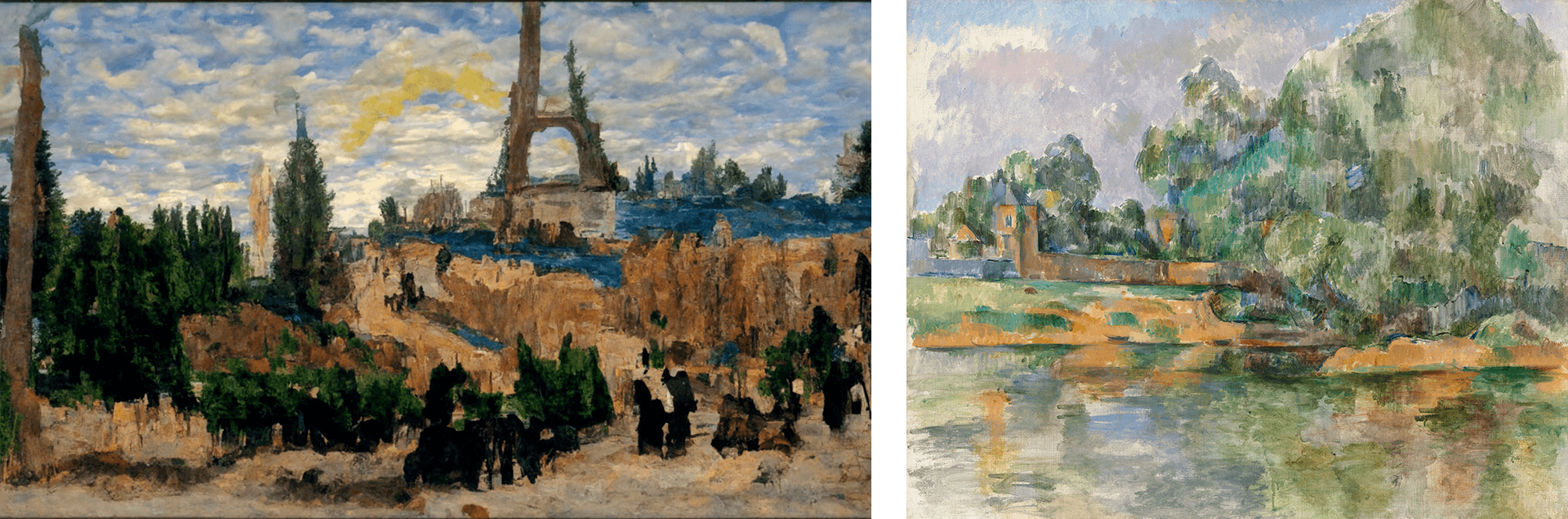

As generative AI models improve, BGSU researchers found that people struggle to tell the difference between AI and human art

What does it mean to be human?

Many would contend the ability to create and appreciate art is uniquely human. Fascinating new research from a Bowling Green State University doctoral student and professor, however, demonstrates that generative artificial intelligence can blur the lines when it comes to identifying the source of images, even as humans maintain a subsurface preference for genuine human art.

Andrew Samo, a doctoral candidate studying industrial and organizational psychology at BGSU, and Distinguished Research Professor Dr. Scott Highhouse published research on AI versus human artwork in the American Psychological Association journal Psychology of Aesthetics, Creativity, and the Arts. They found that people generally can’t tell the difference between AI and human art but prefer the latter, even if they can’t explain why.

“Art was thought to be uniquely human because it gives off a feeling or communicates some idea about the human experience that machines don’t have,” Samo said. “In some ways, it’s to be expected people felt more strongly about human-made art.

“But at the same time, it was surprising: How can people feel so differently about one, but not be able to cognitively explain why?”

Building from the past

Prior research had found that humans tend to show bias against AI artwork, but as new, generative AI models continued to improve, Samo and Highhouse wondered if people would be able to tell the difference between AI art and human art without prodding.

To answer their question and avoid introducing bias, the researchers did not tell participants that some of the art they would view was made by AI. Instead, participants were told only that they would be viewing a series of pictures and rating them on 30-50 aesthetic judgment factors, a reliable, psychometrics-rooted method of quantifying artistic emotions and experiences.

“Previous research demonstrated that people are biased against art if they know it was made by AI, and they’ll say they don’t like it as much,” Samo said. “But no one had really looked at this new AI art without any kind of deception. I thought, ‘If we just show people these images, would they even know which is made by humans and which is made by AI? And if they do know which one is which, how do we know what features distinguish them?’”

What they found showed the capability of generative AI: Participants correctly identified the source of the artwork only slightly more than half the time, and even so, were not confident that their guesses were correct.

“It’s really a coin flip — when you show them the pictures, there’s about a 50-60% chance they’ll get it right,” Samo said. “Generally, people don’t know which is which, and when we asked how confident they were, they were typically saying they were only 50% confident.”

An unexplainable feeling

The struggle in differentiating between originators of artwork came with another interesting finding: People prefer human artwork, even if they aren’t totally sure why.

After reviewing data, Samo and Highhouse found there were clear differences in how people felt about human artwork versus AI artwork.

Even though participants were not confident in their identification of the source, they consistently felt more positively about human-generated art.

“They typically didn’t know the difference and admitted they couldn’t tell the difference once you asked them, but the next layer of that is people reliably said they liked the human images more without even knowing whether it was AI or not,” Samo said. “We found people have more positive emotions when looking at the human paintings, which makes sense.”

Out of all the aesthetic judgment factors, four accounted for the majority of the variance. Human-made art scored higher in self-reflection, attraction, nostalgia and amusement, a sign that people felt more connected to human art.

But when participants were asked why they felt that way, they couldn’t explain it. One interpretation is that their snap judgments connected with human art, but their analytical processing couldn’t quite articulate the reason.

A theory the researchers discuss in the paper is the possibility that the brain picks up on tiny differences in art created by AI.

“One possible explanation could be the uncanny valley effect — something that is trying to look human — but there are these micro-perceptions that are slightly off,” Samo said. “Everything looks good holistically, but there are these small details in the visuals or creative narratives that your subconscious is picking up on the rest of you isn’t.”

The next wave

While AI was once believed to be capable of replicating only certain tasks, such as those on an assembly line, generative models have shown the capacity to do much more.

Samo and Highhouse’s research is a glimpse into the possibilities of generative AI.

“For the longest time, AI was thought to be able to automate line work, data management, or anything else that’s very repetitive, routine, or non-original,” Samo said. “But with generative AI models, they’re able to not only do those repetitive tasks but come up with art, music, poetry, prose, text that is almost indistinguishable from humans. And this raises exciting possibilities for applications of generative AI.”

In the short time since Samo and Highhouse collected data, generative AI models have continued to improve and become more widely available.

Samo said it’s important to continue studying the psychological effects and human impacts of AI as models become more powerful and more widely used in everyday life.

“Some of these new models can generate images that are really high quality and high fidelity toward the actual world, so it’d be interesting to run this study again,” Samo said. “If you redid this, I’m not sure if people would be able to tell the differences at all.”


Media Contact | Michael Bratton | mbratto@bgsu.edu | 419-372-6349

Updated: 02/13/2025 12:47PM